But even this seismic shift may only be a warmup act for the AI era that is starting to unfold at breakneck speed. Recent rapid advancements in AI have led to models with emergent capabilities, like logical reasoning, far ahead of when these breakthroughs were forecast and to the surprise of many of the field’s most influential pioneers. And forward development of AI is now being enabled by models that are helping create AI’s two vital ingredients: data and processing power. By helping generate datasets and design enhanced processors, AI is enabling the training of even more capable AI models, like a flywheel spiraling recursively upwards.

Even in the most conservative plausible scenarios of future AI development, including no further breakthroughs like the discovery of artificial general intelligence (where a digital mind rivals human intellect across all domains, the stated aim of leading AI labs), recent advances have set the stage for transformation of profound scale and pace.

These advances are, for the first time, creating entities with sensing and decision-making capabilities that rival humans in all manner of tasks, including routine ones like driving cars, strategic ones like generating business scenarios, creative ones like composing music, and analytical ones like valuing houses.

Yet AI’s potential goes far beyond replicating human tasks, to encompass tackling previously intractable “grand challenges,” ranging from nuclear fusion to climate change and food security. One example is protein folding, where in 2022 Google DeepMind announced it had predicted the structure of almost every known protein. This is accelerating discoveries across nearly every field of biology, from precision medicine to enzymes for breaking down plastic waste.

AI’s expected near-term impact alone is startling. Various forecasts have predicted annual gains of as much as $15 trillion to global economic output by 2030,1,2,3 equivalent to the combined output of Japan, Germany, India, and the U.K., collectively 15% of the $100 trillion world economy today. Estimates based on recent advances in generative AI and other technologies suggest activities accounting for up to 30% of current employee hours in the U.S. could be automated by 2030, rising to as much as 70% beyond then.4,5

In the so-called AI arms race, governments worldwide are declaring leadership ambitions and vying to capture upsides by cultivating domestic AI industries and enabling infrastructure like supportive policy frameworks, semiconductor foundries, and even national supercomputers for training proprietary AI models. Simultaneously, they are scrambling to understand and mitigate downside risks by studying AI safety and modeling potential societal dislocations, amid what may constitute a pivotal moment in human history akin to the Industrial Revolution or even the advent of agriculture.

AI’s Emergence as a General-Purpose Technology

The term “artificial intelligence” was coined in 1955 in a proposal for a Dartmouth College research project “to find how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves.” That project, whose aims are now a reality, saw the emergence of AI as a distinct field. A machine’s ability to perform tasks requiring expert knowledge was first demonstrated in 1965 by Stanford University’s Dendral, an early AI system that could suggest possible molecular structures for organic compounds. The ability of machines to outperform human intelligence in specific domains was proven in 1997, when IBM’s Deep Blue defeated the reigning world champion at chess.

But surges of promise and investment in the twentieth century were often met with subsequent disappointments, leading to periods of stagnation known as “AI winters.” Progress and adoption were constrained by high development costs, limitations in past AI architectures that depended on domain-specific rules and knowledge being programmed—confining systems like Dendral and Deep Blue to single functions like predicting molecular structures and playing chess—as well as short supply of computational power and data.

Twenty-first century expansion of the digital economy has attenuated those historical challenges and seen various fields of AI become a longstanding feature of daily life, with more than half of companies sampled in some surveys reporting use of AI in at least one business function, dropping to 3% in five or more functions.6

The convergence of vast data and computational power together with modern AI architectures—including deep learning neural networks inspired by the workings and flexibility of the human brain—has propelled AI to embody a broader range of advanced capabilities and applications. This includes the advent of so-called foundation models, which are large systems trained on vast quantities of diverse data, with large language models like OpenAI’s GPT-4 being one type. Foundation models not only perform a wide variety of functions—just as readily summarizing a 100-page technical report on battery manufacturing as finding weaknesses in legal contracts or tailoring a meal plan to a family’s dietary requirements and budget—they serve as a base for further fine tuning and adaptation to specific tasks or applications. For example, Google’s Med-PaLM 2 has been tuned from its foundation models to answer medical questions. Salesforce’s Einstein GPT leverages OpenAI’s foundation models to generate content for marketing, sales, and customer service professionals.

Dall-E 3: A mosaic art of a giant AI hand emerging from a data cloud, with each tile symbolizing a distinct AI function or application.

Foundation models, and the sophistication and versatility of modern AI technology more broadly, mean that AI has emerged as the twenty-first century’s general-purpose technology. And it has entered the exponential phase of its development curve. Annual global patent filings for AI technologies grew at a compound annual rate of 87% in the five years from 2016 to 2021, up from 19% in the preceding five years.7

While future approaches to making more capable AI may vary, “bigger is better” has fueled progress to date and seen astronomical increases in the scale and performance of the best AI models. Consider, for example, that OpenAI’s GPT-4, released in March 2023, was developed with 75,000 times more parameters (analogous to knobs for tuning a model’s performance) and computing power than Google’s BERT-Large, a cutting-edge model when introduced in 2018. In the last 10 years, the amount of compute used to train the best AI models has increased by a multiple of 5 billion, from two petaflops to 10 billion petaflops, and supercomputers capable of powering models several hundred times the size of GPT-4 are planned to come online in 2024. Meanwhile, the cost of training models that are equivalent to GPT-3, which OpenAI released in the summer of 2020, has since fallen tenfold.
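To give a feel for what a 5-billion-fold increase over 10 years means, the short sketch below converts it into an implied yearly growth factor and doubling time. It assumes growth happened at a constant compound rate, which is a simplification made here for illustration and not something the figures above state; the function names are hypothetical.

```python
import math

def implied_annual_growth(total_multiple, years):
    """Constant yearly growth factor that compounds to total_multiple over `years`."""
    return total_multiple ** (1.0 / years)

def doubling_time_months(annual_factor):
    """Months needed to double at a constant annual growth factor."""
    return 12.0 * math.log(2.0) / math.log(annual_factor)

# Figures quoted in the text: two petaflops to 10 billion petaflops in 10 years.
start_petaflops = 2.0
end_petaflops = 10e9
multiple = end_petaflops / start_petaflops  # 5 billion

# Assumption: smooth compound growth across the whole decade.
annual = implied_annual_growth(multiple, 10)

print(f"total multiple: {multiple:.3g}")
print(f"implied annual growth factor: {annual:.2f}")
print(f"implied doubling time: {doubling_time_months(annual):.1f} months")
```

Under that constant-rate assumption, training compute would have grown roughly ninefold every year, doubling about every four months.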

More practically, rapid innovation and launches from both AI labs and technology giants including OpenAI, Anthropic, Google, and Meta have delivered step-change advances in large language models’ capabilities. These include understanding context, emotion, and nuance in language; logical reasoning and planning; mathematics; creativity; mass data processing; customizing responses to user preferences and circumstances; and generating multiform outputs like tables, charts, audio, and video that are less likely to exhibit inaccuracies, bias, or harmful content.

Large language models’ performance in tests of theory of mind (the ability, until now considered uniquely human, to sense others’ unobservable mental states, including their knowledge, intentions, beliefs, and desires) went from virtually zero in 2019, to 40%, equivalent to that of 3.5-year-old children, in May 2020, to 70% in January 2022, and to 95% in March 2023.8

But as with all general-purpose technologies, far more important than the development of core AI technologies themselves is the tidal wave of AI-enabled innovation that has just started sweeping through industries, reinventing customer experiences and shifting paradigms in everything from healthcare and energy to retail and media.

The Corporate Agenda

In this context, AI has rapidly ascended to the forefront of leadership agendas, with the percentage of S&P 500 corporations mentioning it in earnings calls sharply increasing, and average mentions per call as much as doubling quarter-over-quarter through recent quarters.

Recent surveys of US and global CEOs from across industries indicate that:

- 75% believe that future competitive advantage will depend on who has the most advanced generative AI.9 Just 13% believe the potential opportunity of AI is overstated, while 87% believe it is not.10

- 65% believe generative AI will have a high or extremely high impact on their organization in the next three to five years, far above every other emerging technology.11

- 78% believe AI will have a high or extremely high impact on innovation.11 43% have already integrated AI-driven product or service changes into capital allocation, and a further 45% intend to in the next 12 months.12

But they also indicate that most CEOs believe their organizations are unprepared and will be challenged to keep pace:

- 60% are still a year or two away from implementing their first generative AI solution.11

- 68% are yet to appoint a central leader or team to coordinate their generative AI efforts, with most saying that their organizations lack critical enablers like talent and governance.11

- 67% either haven’t started or are in the initial stages of evaluating risks and mitigation strategies, amid concerns including inaccuracy, cybersecurity, and data privacy; and only 5% have a robust AI governance program in place.11

Given the profound scale, pace, and uncertainty of the AI revolution, and the overwhelming expanse of opportunities and challenges it presents, it is unsurprising that companies are equally energized and unprepared. Organizations often succumb to inertia or paths of least resistance when faced with disruptive technologies, due to dynamics that Innosight’s co-founder, the late Professor Clayton Christensen of Harvard Business School, identified three decades ago through his pioneering research and subsequently captured in his seminal book, The Innovator’s Dilemma. But while some forward-thinking companies are getting out ahead of the curve, we are only at the very start of the AI era, with winners and losers far from decided. Companies that effectively navigate disruptive change and capture the immense potential of AI for growth and value creation will be those that act boldly and early. This will require leadership teams to foster a shared sense of urgency and conviction to innovate their business models in the absence of perfect information, while creating proprietary insights, embedding strong AI capabilities into their organizations, and deftly managing AI-related uncertainty.

Dall-E 3: A diverse group of executives in a boardroom located high above the city, with a breathtaking cityscape view through smart windows with AI projections.

Our five recommendations for leading into the AI future draw from Innosight’s rich legacy of helping companies create new value and advance the frontiers of their industries through strategic transformations, including digital and AI-enabled ones, as well as patterns and tools of disruptive change our institution has researched, applied, and honed over almost a quarter of a century. The recommendations are not focused on tactical steps like establishing task forces and developing risk mitigation plans, but actions we know to be barrier-breaking and difference-making. Together, they form a blueprint for empowering corporate transformation.

1: Align Leadership on a Foundational Understanding and Common Language of AI

Virtually every transformation enabler, from strategy formulation to resource allocation and culture change, hinges on leadership alignment. It is less of a discrete or standalone enabler and more of a vital thread that must run through every facet of a transformation program, including the other four recommendations we introduce here, for starting to effectively navigate the AI era. For example, even the most comprehensive strategies for operational and customer-facing AI transformation will be of little practical use in the absence of leadership alignment.

The AI Common Language Challenge

Leadership alignment relating to AI must start with a shared foundational understanding and common language of AI, which makes it possible for leaders to engage in coherent conversations without inadvertently talking past each other. Notably, AI’s very nature makes this challenging. Not only is it a complex, technical, and fast evolving domain, but AI’s pervasive reach as a general-purpose technology means executives from different functions—like marketing, HR, and R&D—are increasingly exposed to distinct tools, use cases, and impacts.

This can make them likely to interpret terms and issues differently and to varying extents, often biased by their specific purviews, at the expense of recognizing the true breadth and depth of AI’s implications for the organization as a whole. The presence of many diverse and narrow AI purviews among leadership teams recalls the tale of four blind monks each touching a different part of an elephant (its tusk, trunk, leg, or tail) and discerning, respectively, a spear, snake, tree, or rope.

A foundational, nontechnical, shared understanding of terms relating to the following is vital for empowering leadership teams to understand the nature, potential, and challenges of AI:

- Fields of AI: Specific areas of AI that focus on distinct types of problems and techniques to tackle them, such as machine learning, computer vision, natural language processing, and robotics. These fields are distinct but often interplay. For example, the augmented reality feature in the Google Translate app allows a user to point their camera at text on a sign or menu, with the app then using computer vision to detect and recognize the text, natural language processing to translate it, and machine learning to improve translation accuracy over time based on feedback and context.

- Types of AI models: The approaches AI systems use to interpret data, recognize patterns, and make decisions. This includes discriminative models and generative models.

- AI methodologies and processes: The architectures, such as deep learning and neural networks, that form the foundation of AI, along with processes like training and deployment that enable it to function. Familiarity can help explain how and why AI behaves as it does, including in ways that sometimes seem unpredictable and mysterious, such as making decisions and acquiring capabilities that aren’t always expected, understood, or traceable.

- Ethics and trust: Terms like explainability, AI bias, and alignment, which address the need to ensure AI behaviors and decisions are transparent, equitable, and aligned with desired outcomes.

Leadership teams should adopt common definitions of terms like these in ways that are intuitive, illustrated, and relatable in the context of their industries. A glossary of common terms, provided in the appendix, can serve as a starting point for this.

Understanding Generative and Discriminative Models

To underscore the importance of a common language of AI, consider two fundamental types of AI models: generative and discriminative. While most leaders are acquainted with generative AI to at least some degree, many are unfamiliar with discriminative AI, a term that several executives we have advised have, understandably, interpreted on first encounter to mean AI that exhibits bias. Such unfamiliarity can result in AI strategies with meaningful gaps. Because of their unique ways of learning from and using data, these two types of models are distinct in their abilities to enable immensely powerful use cases, and also entail different types of risks, which leaders deploying them need to understand.

A shared foundational understanding of these two important types of AI models can start with intuitive and illustrated definitions, like the following:

Dall-E 3: A split representation of generative AI embodied by a robot artist creating art and discriminative AI characterized by a robot scrutinizing digital patterns with a magnifying tool.

- Generative AI models are like artists. They absorb, grasp the essence, and draw inspiration from existing examples, from which they then craft their own novel creations. In chatbots, they learn from massive textual datasets to compose new, relevant responses to prompts and questions. Deepfakes are another example. These systems analyze vast amounts of video footage and then create realistic artificial ones showing events that never happened. Essentially, generative AI models learn patterns in data to “generate” new, original outputs.

- Discriminative AI models are like detectives. They spot clues that let them distinguish between and classify objects. In image recognition, they can tell a cat from a dog by pinpointing specific characteristics of each animal. Similarly, they filter spam by identifying features that are typical of junk emails and atypical of regular ones. Essentially, discriminative AI models learn patterns in data to “discriminate” between objects they are presented with.
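For readers who want to see the distinction in miniature, the following toy sketch contrasts the two ideas. It is an illustration under stated assumptions, not how production systems are built: the generative half is a simple word-pair (bigram) sampler, the discriminative half is a small logistic-regression spam filter, and all function names and example sentences are hypothetical.

```python
import math
import random
from collections import defaultdict

# Generative sketch: learn which word tends to follow which, then sample new text.
def train_bigram_model(sentences):
    """Record, for each word, the words observed to follow it."""
    followers = defaultdict(list)
    for sentence in sentences:
        words = ["<s>"] + sentence.split() + ["</s>"]
        for current, nxt in zip(words, words[1:]):
            followers[current].append(nxt)
    return followers

def generate(followers, rng, max_words=12):
    """Sample a new word sequence from the learned follow-on patterns."""
    word, output = "<s>", []
    while len(output) < max_words:
        word = rng.choice(followers[word])
        if word == "</s>":
            break
        output.append(word)
    return " ".join(output)

# Discriminative sketch: learn a boundary between two labelled classes.
def train_logistic(examples, epochs=200, lr=0.5):
    """Fit per-word weights so that sigmoid(score) approximates P(spam | words)."""
    weights, bias = defaultdict(float), 0.0
    for _ in range(epochs):
        for text, label in examples:
            words = set(text.split())
            score = bias + sum(weights[w] for w in words)
            error = label - 1.0 / (1.0 + math.exp(-score))
            bias += lr * error
            for w in words:
                weights[w] += lr * error
    return weights, bias

def predict_spam(model, text):
    """Return True when the learned score falls on the spam side of the boundary."""
    weights, bias = model
    score = bias + sum(weights.get(w, 0.0) for w in set(text.split()))
    return score > 0

# Hypothetical toy data, invented for this illustration.
corpus = ["the cat sat on the mat", "the dog sat on the rug", "the cat chased the dog"]
labelled = [
    ("win a free prize now", 1),           # 1 = spam
    ("free money click now", 1),
    ("meeting agenda for monday", 0),      # 0 = regular email
    ("lunch on monday with the team", 0),
]

bigram_model = train_bigram_model(corpus)
spam_model = train_logistic(labelled)

print("generated:", generate(bigram_model, random.Random(0)))
print("'free prize' flagged as spam:", predict_spam(spam_model, "free prize"))
print("'monday meeting' flagged as spam:", predict_spam(spam_model, "monday meeting"))
```

The generative half produces a new sentence that need not appear in its training examples, while the discriminative half only ever answers a classification question about the text it is shown.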

Additionally, leadership teams need a broad understanding of the current and emerging capabilities of AI. This should cover both automating or augmenting tasks routinely performed today using human intelligence (things humans can do) and performing tasks that are either entirely out of reach of human intelligence alone or in which AI can unlock radical performance leaps along dimensions like speed, scale, sophistication, accuracy, and cost (things humans cannot do).

Dall-E 3: Wide landscape of a garden maze where, at the center, a leadership team has assembled a clear AI blueprint, signifying alignment and shared understanding.

Regarding the latter, consider for example that over a half-century timeframe, researchers had uncovered the structure of about 190,000 proteins, with single ones having taken them weeks, months, or even years. By contrast, Google DeepMind announced in 2022 that its AlphaFold model had predicted the structure of almost all proteins known to science, some 200 million, in just 18 months. Similarly, while personalized financial and investment advice has until now been the preserve of those whose wealth affords access to professional advisors, AI has brought the prospect of inexpensive, high-quality, personalized financial advice for everyone within sight.

Gaining this understanding will require leaders to look to examples of where AI is enabling “the art of the possible” far beyond the confines of their own industries, since meaningful parts of the future of AI are already here, but are immensely unevenly distributed. Examples of tasks that generative and discriminative AI can do that humans can and cannot do are shown in Figure 1.

2: Develop Value-Creating Strategies for Operational and Customer-Facing AI Transformation

A foundational understanding of AI is crucial for business leaders to grasp its vast possibilities within their organizations. But the capacity of AI to enable transformation is orders of magnitude greater than that which any organization can resource and assimilate in even a multi-year planning cycle. Leadership teams therefore need to judiciously navigate between the sheer expanse of potential AI use cases and those that will truly drive business performance and customer value, seeing AI as a means to an end and not the end itself.

The CEO of Walmart, Doug McMillon, frames this tension in his own organization by saying that when it comes to applications of AI, “for customer experience, associate experience, efficiency, and forecasting in our supply chain, AI is a big opportunity for us and it frequently feels like we’re only limited by our imagination.” He also acknowledges, “It’s important for us to realize and stay focused on what we’re trying to solve for and not get enamored with any particular technology, whether AI or otherwise.”

Driving value creation through AI will oftentimes require companies to eschew superficial and obvious applications that their peers are trending toward, to instead discover the use cases that will enable meaningful value creation. As one bank CEO expressed to us, “I don’t understand why companies are focusing on chatbots when there’s so much opportunity to understand the customer better and improve products and experiences.”

Leaders should start by comprehensively inventorying AI’s potential business impact across two broad areas: operational AI transformation, and customer-facing AI transformation. The first of these involves using AI to power processes across virtually every organizational function in ways that unlock not only greater efficiency but also effectiveness and even competitive advantage. The second entails using AI to create differentiated customer value by embedding it in existing or new customer-facing products and experiences, within or beyond the existing core business.

Operational AI Transformation

Operational AI transformation involves using AI to automate and augment processes across virtually every organizational function—like strategic planning, R&D, product design, supply chain, operations, finance, HR, IT, legal, marketing & sales, and customer service—to increase both efficiency and effectiveness. For example, in finance, AI is enhancing decision making by improving financial planning and forecasting, evaluating business cases, and enabling increasingly dynamic portfolio capital allocation, while also streamlining administrative tasks in treasury, tax, and audit.

In HR, it is enhancing all parts of the employee lifecycle, from workforce planning and role design to candidate screening, designing compensation and benefits plans, streamlining performance review cycles, identifying and triggering retention interventions for high performers at risk of attrition, and simplifying routine tasks through employee self-service tools. In customer service at Octopus Energy, where AI is doing the work of hundreds of people, CEO Greg Jackson has said that, “Emails written by AI delivered 80 percent customer satisfaction, comfortably better than the 65 percent achieved by skilled, trained people.”

Dall-E 3: A workspace inspired by Nike, where AI software on computers and tablets is used to draft innovative sneaker designs, with prototypes displayed around.

While applications across common processes, like those in finance, HR, and customer service, can indeed create value, the highest-impact operational AI transformation applications are those that unlock competitive advantage by targeting the cost and revenue drivers that are central to an industry’s value creation formula.

For instance, fuel costs are a major profit determinant in aviation, and Alaska Air is using AI to chart fuel-efficient flight paths. In e-commerce, 50% of products Amazon sells are marketed to customers through its personalized recommendation engine, contributing to the company’s 40% share of the US e-commerce market, almost six times that of its closest competitor, Walmart. To quote the Chief Product Officer of a consumer goods giant we know that is using generative AI in product design, “The concepts we’ve designed with AI are getting better scores in consumer acceptance tests than those designed by agencies.”

Examples of companies using AI for operational transformation in select business functions are shown in Table 1.

Customer-Facing AI Transformation

Customer-facing AI transformation involves embedding AI into existing or new customer-facing products and experiences to solve customer “jobs to be done,” defined as the progress or goal a customer is seeking to satisfy in a particular circumstance. (By way of example, a customer might “hire” a cup of coffee to solve jobs to be done relating to feeling alert, socializing, or having a morning ritual.) The value at stake is significant; alongside labor productivity and other types of operational efficiencies, 45% of total economic gains from AI by 2030 are expected to come from product enhancements, stimulating consumer demand.13

Customer jobs to be done that a company seeks to solve with AI-powered solutions may be the same as or different from those that its existing solutions address today. For example, a customer might hire Adobe’s Firefly image generative AI to create high-quality and unique marketing collateral at high speed and low cost, or just to express creativity, much as that same customer might previously have hired Adobe Photoshop.

Panera Bread, on the other hand, is exploring AI to produce personalized family meals on demand, tailored to specific dietary and nutritional preferences. The aim is not to solve the company’s traditional focal job to be done of having a quick and healthy lunch, but to help families solve the job to be done of accessing a convenient meal that works for everyone.

Notably, companies should not pursue novel AI-enabled products and experiences just because they are technically possible—in other words, AI for the sake of AI. Instead, companies should prioritize innovations that solve important and high value customer jobs to be done better than existing solutions.

Examples of companies across industries integrating AI into customer-facing products and experiences are shown in Table 2.

Crucially, beyond exploring ways in which AI can enhance existing business models, forward-thinking companies should break free from today’s paradigms and recognize the power of AI to truly reinvent industries. This will require companies to apply an informed understanding of AI’s capabilities and how those capabilities are being applied far beyond their own industry confines—together with a mindset of challenging the status quo—to reimagine their businesses.

In healthcare, for example, AI is unlocking step-change progress across the current value chain, from drug discovery to diagnostics and surgery. But it is also ushering in a new age of healthcare by simultaneously enabling two long-awaited paradigm shifts: the first from standard drugs prescribed through trial and error to highly personalized precision medicines, and the second from treating sickness to preventing disease through innovations like remote health monitoring and digital twins.

Similarly, in education, AI is already streamlining and enhancing processes in the traditional paradigm of standard curricula taught en masse, from program and content development to admissions and assessments. But it has also triggered a transformative shift towards truly unique, engaging, and impactful learning experiences—where discriminative AI evaluates an individual’s baseline knowledge, abilities, and motivations, and generative AI then crafts personalized learning goals and customizes every facet of content delivery from timing to format, including immersive virtual reality experiences—to guide students to truly joyful moments of discovery and realizing all that they are capable of learning. In contexts like these, companies that apply AI only to supercharge their existing paradigm business models risk getting left behind.

Sequencing a Roadmap: Table Stakes and Leadership Imperatives

Having inventoried AI’s potential for operational and customer-facing transformation of their businesses, leadership teams should translate this understanding into a sequenced roadmap of initiatives. This roadmap requires strong leadership alignment and should be treated as a living document given the pace and uncertainty of the unfolding AI era, which demands a truly emergent and discovery-driven approach to strategy.

Beyond normal capital allocation criteria for ensuring business impact, prioritization should consider the need to simultaneously pursue both operational and customer-facing AI transformation initiatives right from the start. Not doing so might hinder the organization in fostering learnings and muscles for either embedding AI in business operations or innovating AI customer products and experiences, both of which will be crucial in the AI era. The organization’s current AI maturity and its readiness to manage complex models and use cases should also be considered.

Without experience with simpler AI systems, deploying advanced ones can entail heightened risks. These include potential business interruptions and even reputational damage, especially if these systems behave in ways that are not expected or fully understood in high-profile contexts, such as customer-facing ones—as when Snapchat’s AI chatbot, My AI, caused unease among users by unexpectedly posting an image to its own story before providing various explanations for its actions.

Finally, organizations should also consider those priorities that are most time sensitive. These can take the form of both table stakes and leadership imperatives.

Table Stakes Imperatives

In industries vulnerable to known AI shake-ups, the immediate choice facing companies is to risk being disrupted or not. This may be the result of AI creating burning platforms or becoming table stakes and shaping either the basis of competition or customer expectations, in ways that necessitate either operational transformation or customer-facing transformation with AI.

In terms of operational transformation, the use of AI in drug discovery is rapidly becoming a basic feature in pharmaceuticals. Retail giants are embracing AI to automate and optimize supply chains, in an industry where efficiency is paramount. Similarly, big media companies are fast turning to AI to assist movie and television production amid soaring costs, which for major titles like Indiana Jones and the Dial of Destiny or The Little Mermaid can escalate into the hundreds of millions of dollars, demanding equally massive box office returns just to break even.

Regarding customer-facing transformation, AI-powered customer offerings are already set to become the norm in several industries. In automotive, the race towards AI-powered autonomous vehicles is intensifying, as is the urgency for automakers to navigate the potential knock-on transition from consumer ownership to consumer access of vehicles. Pressure is mounting on education companies like Pearson and Chegg to integrate AI features to offer personalized and engaging learning experiences that improve on those their customers have been self-creating with free tools like ChatGPT. Companies in industries like these that do not keep pace may soon find themselves in the path of disruption.

Leadership Imperatives

Even in industries not yet on the cusp of obvious AI-driven disruptions—but that might soon enough be confronted by unforeseen ones—companies should act ahead of the curve to generate business and customer value while building AI muscles. Acting early can let companies exploit narrow windows of opportunity for developing unique and sticky customer-facing products, where being a first mover allows accumulation of hard-to-replicate capabilities and a critical mass of loyal customers.

To that end, forward-thinking companies are using AI to power innovative customer products and experiences across diverse industries. For instance, in financial services, JPMorgan is developing an AI model to help customers select investments tailored to their specific circumstances and needs. In sports, the NBA’s impressive portfolio of AI initiatives includes innovations like personalized highlight reels to redefine the experience of basketball fans.

The considerations raised here are vital as companies manage their AI portfolios, which should be continually stress-tested against the considerations shown in Figure 2.

3: Make Strategic Choices About AI Data and Models

Performance in AI-driven markets hinges on the strategic choices companies make about AI-enabling capabilities. Many traditional sources of competitive advantage will remain relevant in the AI era. But for AI-enabled strategies, two pivotal sources of competitive advantage are the data used to train a company’s models, and the models themselves.

Crafting a Data Strategy

Alongside computational power, data is one of the two key ingredients for training AI models. During training, models are exposed to data and learn to recognize patterns and features correlated with outcomes in the data. This yields a model that can apply learned patterns to make decisions or predictions when encountering new, unseen data or requests. The quality of an AI model’s output is therefore a direct function of its training data. Models trained on data that embody biases will likely reproduce or even amplify those biases. For instance, Baidu’s generative AI chatbot, Ernie, has proposed that the origin of the COVID-19 virus was lobsters shipped to Wuhan from America. Amazon abandoned its initial foray into using AI to screen job candidates in 2018, following revelations of bias against women.
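To make the link between training data and model behavior concrete, the following is a minimal sketch in Python, not a description of any company's actual system. It assumes a deliberately simplified "model" that only memorizes how often each group received a positive outcome in historical records. The names `train`, `predict`, and `history`, and the data itself, are hypothetical and exist only for illustration.

```python
from collections import defaultdict


def train(records):
    """Learn, for each feature value, the share of positive outcomes seen in training."""
    counts = defaultdict(lambda: [0, 0])  # feature value -> [positives, total]
    for feature, outcome in records:
        counts[feature][0] += outcome
        counts[feature][1] += 1
    return {feature: pos / total for feature, (pos, total) in counts.items()}


def predict(model, feature, threshold=0.5):
    """Predict a positive outcome when the learned rate meets the threshold."""
    return model.get(feature, 0.0) >= threshold


# Hypothetical, deliberately skewed history: group "A" was approved
# far more often than group "B" in past decisions.
history = [("A", 1)] * 80 + [("A", 0)] * 20 + [("B", 1)] * 30 + [("B", 0)] * 70

model = train(history)
print(model)                                      # {'A': 0.8, 'B': 0.3}
print(predict(model, "A"), predict(model, "B"))   # True False
```

Because the sketch learns nothing except the pattern in its training records, the skew in the historical decisions becomes the rule it applies to every new case. Real models are far more complex, but the same dependence on training data applies.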

Dall-E 3: A backdrop of digital clouds alongside sleek servers and illuminated data pathways surround a chessboard, symbolizing the strategic nature of choices about AI models and data.

“Bigger is better” has underpinned recent advancements in AI, with leading models being trained on enormous datasets to support their complexity. For instance, GPT-4 reportedly has over a trillion parameters, a measure indicative of a model’s complexity and suggestive of the extensive amount of training data it requires. But smaller models trained on meticulously curated, high-quality datasets can outperform larger counterparts that have been trained on more expansive but indiscriminate ones. A notable illustration is Tesla’s Full Self-Driving 12 system, which learned to drive by processing billions of frames of video collected from the cars of Tesla drivers. That system was trained only on videos that human labelers, directed by Elon Musk, deemed consistent with the behaviors of “a five-star Uber driver.” Another example of this principle in action is BloombergGPT, which Bloomberg trained from scratch on a mix of proprietary and select public financial data to execute financial tasks suitable for natural language processing, such as sentiment analysis and answering financial questions. Despite only having a small fraction of the parameters of some of the largest language models, it consistently outperforms in its specialized domain.

Given the pivotal role of data in developing AI models, companies should adopt a strategic and intentional approach to data acquisition and management—essentially, formulating a robust data strategy. At the outset, a data strategy requires companies to align their data inputs with the specific outputs they intend to create and their broader business strategy. This involves identifying the types of data required, choosing the most relevant sources for generating or accessing that data, and curating the data. Sources can include:

- Core proprietary data. These are internal data assets unique to the organization, such as customer data, transaction data, and other types of data generated within the company. For example, insurance companies use data on customers’ characteristics, past purchasing patterns, willingness to pay, and previous claims to evaluate risk and price insurance products. Notably, companies can utilize AI to cleanse large and unstructured datasets, enhancing data quality and usability.

- External proprietary data. This is data sourced from external partners or vendors via agreements or partnerships. Credit bureaus like Experian access consumer financial data from lenders to generate credit scores via machine learning and, in turn, sell this information back to lenders to feed into their risk models.

- External non-proprietary data. This refers to data that is publicly available and accessible by any organization, such as government datasets including census and real estate data, and open academic research. For instance, FedEx integrates public weather and traffic data into its machine learning algorithms for optimizing shipping routes.

- Latent data. This refers to data that is available but has not been previously used or analyzed for specific purposes. Harvard Medical School’s AI model, which can identify people at the highest risk for pancreatic cancer up to three years before diagnosis, was trained on latent data: specifically, the medical records of nine million patients who did and did not eventually develop pancreatic cancer.

- Synthetic data. Synthetic data is computer-generated information. It is created to model specific conditions or scenarios, and to augment, mitigate bias or gaps in, or replace real-world data. Alphabet’s self-driving technology company, Waymo, uses synthetic data generated through simulations to train its autonomous driving models. These simulations create diverse and challenging scenarios that help improve the model’s performance in real-world conditions.

Each data source and dataset presents distinct tradeoffs in terms of relevance, quality, sufficiency, accessibility, cost, compliance, bias, and security. In many AI applications, developing winning models will require companies to leverage data from a variety of sources. For instance, Adobe’s image generative model, Firefly, was trained on a blend of the company’s proprietary stock images, openly licensed content, and out-of-copyright public domain content, thus ensuring comprehensive coverage while avoiding potential copyright infringements and legal claims.

Most companies would benefit from establishing a centrally coordinated data strategy—one that maintains flexibility and avoids constraining the ability of large business units to pursue and leverage unique data assets in service of their specific AI strategies, which may vary from those of other parts of the company.

A centrally coordinated data strategy offers several advantages:

- Innovation synergies. Enables access to data previously held in silos, empowering development of AI solutions that leverage the full potential of the organization’s data assets and promoting cross-functional collaboration and learning.

- Cost and quality gains. Enables scale efficiencies in data acquisition, storage, and processing, reduces redundancies, and facilitates higher standards of data quality.

- Compliance best practices. Ensures uniform policies and security measures, mitigating risks related to cybersecurity, data privacy, and legal and regulatory noncompliance.

AI Models: Choosing to Build, Buy, or Partner

In conjunction with developing a robust data strategy, companies should make informed decisions about whether to construct models in-house, acquire and refine existing models, or seek strategic partnerships. These approaches carry distinct trade-offs and are best suited to specific circumstances and use cases.

Building Proprietary Models

Developing proprietary models, whether purely organically or through AI startup acquisitions, can yield unparalleled levels of control, customization, data security, traceability, and freedom to adapt the model to evolving needs. However, it generally entails substantial financial investment, extended development lead times, and a high level of organizational readiness and digital maturity compared to buying or partnering.

It is therefore generally best reserved for highly strategic AI applications where technology ownership can confer competitive advantage and facilitate organizational learning. For instance, JPMorgan, which employs around 1,500 data scientists and machine learning engineers, has applied to trademark IndexGPT, a model it is developing to help customers select investments tailored to their specific circumstances and needs. Given the immense strategic and financial value inherent to a bank owning an effective and scalable AI financial advisor, opting to build a proprietary model in this scenario is prudent.

Buying And Fine-Tuning Existing Models

Developing proprietary models is not always practical or necessary. Companies can instead adapt technology providers’ existing models to their specific circumstances and use cases. An example of this approach is Salesforce’s Einstein GPT, which is fine-tuned from OpenAI’s foundation models to generate content for marketing, sales, and customer service professionals utilizing proprietary customer data from Salesforce, ensuring personalized and secure AI functionalities distinct from the foundational OpenAI models.

Reliance on third-party models can, though, present two key challenges. First, sustainable differentiation may be compromised if fine-tuning and integration are not carried out in ways that provide a competitive advantage over foundational models or easily replicated “me too” solutions. For instance, Jasper, which uses OpenAI’s technologies to create marketing collateral similar to Einstein GPT, achieved unicorn status with a $1.5 billion valuation during its 2022 series A funding round. But within just a year, it was compelled to enact job cuts and markedly reduce the internal value of its common shares amid slowing growth, attributed to its minimal differentiation from OpenAI’s foundational technologies beneath its user interface, unlike Einstein GPT which leverages proprietary data.

The second challenge relates to ensuring AI behavior is traceable and explainable. This is especially true where AI is informing sensitive and highly consequential decision-making—like healthcare diagnoses or financial risk assessments—in which trust, liability, and regulatory considerations demand transparency. Limitations in transparency can stem from insufficient insights into the nature and appropriateness of a third party model’s original training data, architecture, and training methodologies, obscuring its decision-making. Nonetheless, in many non-sensitive situations, leveraging third-party models can offer speed-to-market and cost advantages, particularly for organizations at an earlier stage of AI maturity.

Strategic Partnerships

Close collaborations with technology companies can offer a middle ground between developing proprietary models and adapting off-the-shelf solutions—especially when there are simultaneous limitations in both a company’s internal capabilities to develop proprietary models at sufficient speed, scale, and sophistication, and in the relevance and adaptability of off-the-shelf solutions.

For instance, British retailer John Lewis has embarked on a $127 million partnership with Google to apply AI across a range of use cases, from boosting workforce efficiency to creating highly personalized consumer shopping experiences, such as computer vision-enabled home design and furnishing. Similarly, a multitude of partnerships form a pivotal component of Pfizer’s AI strategy, enabling the company to exploit AI at far greater breadth and depth than it could independently.

Table 3 provides a summary of the key criteria and associated assessment questions for approaching build, buy, or partner decisions.

Choices relating to data and models demand meticulous consideration. Historical disruptions, like media outlets freely sharing their content with technology companies in the early days of the internet, serve as cautionary tales. Given the rapid evolution of the AI landscape, companies must maintain a thoughtful perspective on what data sources and model solutions make sense both now and in the future.

Leaders must allocate adequate time to deliberate on their strategic options, while avoiding unnecessary delay or inaction on AI. Education company Pearson, which has already started integrating AI into its customer-facing products, exemplifies this. Regarding proposals from various AI companies seeking to train large language models on the company’s expansive educational content, Andy Bird, Pearson’s former CEO, stated, “I don’t want to just take the first offer that comes along. We want to be very thoughtful and specific as to what we get out of this versus what a third-party gets out of this. The space itself is moving at a highly fast pace, so being first for announcing a deal for the sake of being first…in hindsight might not be a great idea.”

Dall-E 3: A balance scale with a factory symbolizing the creation of in-house AI models on the left, and shopping carts and a glowing handshake that represent buying existing AI models and partnerships on the right.

4: Implement Organizational, Culture, and Talent Enablers of AI Transformation

Isolated experiments of the type that many companies have started pursuing will yield valuable insights in the early stages of the AI era. But crafting and executing holistic, value-maximizing AI strategies will require distinct organizational enablers. Such enablers (like AI-specific innovation processes, portfolio management and resource allocation systems, risk and governance frameworks, and even strategic planning cycles) are manifold and interdependent. Foundational are the organizational structures, culture, and talent for AI.

Leadership and Organizational Structure for AI

While most companies have yet to designate a senior executive to lead AI, some forward-thinking ones have done so. Coca-Cola has appointed a Global Head of Generative AI, Walmart has assigned responsibility for AI to its Chief Technology Officer, and the U.S. Department of Defense has appointed a Chief Digital and AI Officer. Crucially, these leaders must be afforded the authority and resources required to shape and implement AI strategies, both from the corporate center and in collaboration with business units, which some Chief Innovation Officers and Chief Digital Officers of the past two decades have not always enjoyed.

Since joining Microsoft in 2017 as its companywide Chief Technology Officer, a position that, along with a well-resourced Office of the CTO, was created specifically for him, Kevin Scott has had full autonomy over Microsoft’s research division and AI program. This has empowered him to propel Microsoft from lagging behind rival technology giants like Google and Meta on AI to being at the forefront of the industry in just a few years.

Scott’s agenda has included architecting Microsoft’s multi-billion-dollar investments in and partnership with OpenAI, a move that countered his company’s formerly insular culture that favored in-house ideas, and instituting “Capacity Councils” to allocate scarce AI computational resources to the most commercially promising initiatives. This allowed him to rein in a sprawling array of pet projects, much to the displeasure of some employees who left the company as a result.

Alongside empowered senior leadership, companies should also consider which organizational construct will best enable their strategies. Archetypes include the following:

- Centralized AI: In this model, a centralized team drives AI initiatives for both the enterprise and its constituent business units. This approach can be well suited to organizations in the initial stages of their AI journeys, or those with smaller business units with requirements that can best be served by a single team with greater capabilities than might be feasible to cultivate in each business unit.

- Decentralized AI: Here, AI capabilities are distributed among business units. This model is advantageous to diversified organizations with large, distinct business units that demand unique AI strategies and capabilities. Light coordination can ensure coherence of initiatives and sharing of learnings across the enterprise while avoiding any duplication of effort.

- Hub and spoke: This model integrates a centralized structure, housing common AI assets—like data, computation, and advanced technical know-how—that are leveraged by decentralized teams developing solutions specific to their business units. This balances central coordination with divisional autonomy, unlocking resource synergies and fostering collaboration and shared learning.

Culture Enablers of AI Strategy

It is a truism that “culture eats strategy for breakfast,” as Peter Drucker famously said. But culture can prevent the right strategy from even being born in the first place, long before it has a chance to be eaten. Entrenched behaviors and beliefs can prevent leadership teams from performing the two essential tasks of strategy: specifically choosing priorities, and allocating resources to deliver them.

It is natural for leaders, especially those running businesses whose success formulas have been largely stable during their tenures, to be caught off guard by disruptions lurking around corners—or to not fully grasp the possibilities for transformation presented by a technology as powerful and rapidly evolving as AI. But leaders who remain entrenched in established industry logics and cite their company’s historical success formula as reasons why they are insulated from disruptive change often find themselves left behind.

For proof of this, look no further than the legacy automakers, who as little as seven years ago equated the rise of Tesla to a hype cycle while arguing that their long-established scale, automaking know-how, and up and downstream ecosystems would eventually see them blow past the company, which is now the leading automaker by yardsticks from market capitalization to electrification infrastructure and autonomous vehicle technology. Assertions like “AI cannot replace how we do this for our customers” or “AI-powered business models can’t overcome barriers to entry in our industry,” should be challenged in the face of a sea-change technology that is already immensely powerful and is acquiring capabilities at breakneck speed.

Dall-E 3: In an office breakfast nook, professionals gather around a giant bowl filled with a mix of cereal and glowing AI circuit boards, symbolizing culture eating AI strategy for breakfast.

Another culture-related failure mode involves replacing strategic choices about which AI initiatives to pursue with conviction with either inertia (otherwise known as “let’s monitor it”) or a sprawling portfolio of minor initiatives that each get only a smattering of resources. Such compensating behaviors can stem from cultural dynamics within leadership teams, including discomfort with ambiguity, conflict avoidance, and risk aversion. In the case of AI, these can be even more pronounced due to its general-purpose profile, which engenders endless use cases and disruptive threats and opportunities, and its highly uncertain nature. This necessitates adaptive capacity and experimentation but does not avert the need to place meaningful bets in the absence of perfect information about the future and ahead of faster-moving competitors.

Microsoft is one company whose pursuit of AI has been unlocked by cultural transformation, orchestrated by its CEO, Satya Nadella. It transitioned the company’s culture from being insular, R&D-centric, conservative, and conflict-avoidant—resulting in thinly spread resources across pet projects, years of underperformance and a late arrival to opportunities like the mobile revolution—to a culture focused on growth, empowered portfolio decision-making, risk tolerance, and customer centricity. This enabled the curtailing of AI research projects that were disconnected from business outcomes, and refocusing of resources on major AI priorities.

In the words of Microsoft’s CTO Kevin Scott, “this is not a research endeavor… We are trying to build things that are useful for other people to use… It’s just been clear as day that you have to pick the things that you think are going to be successful and give those things the resources to be successful every day.”

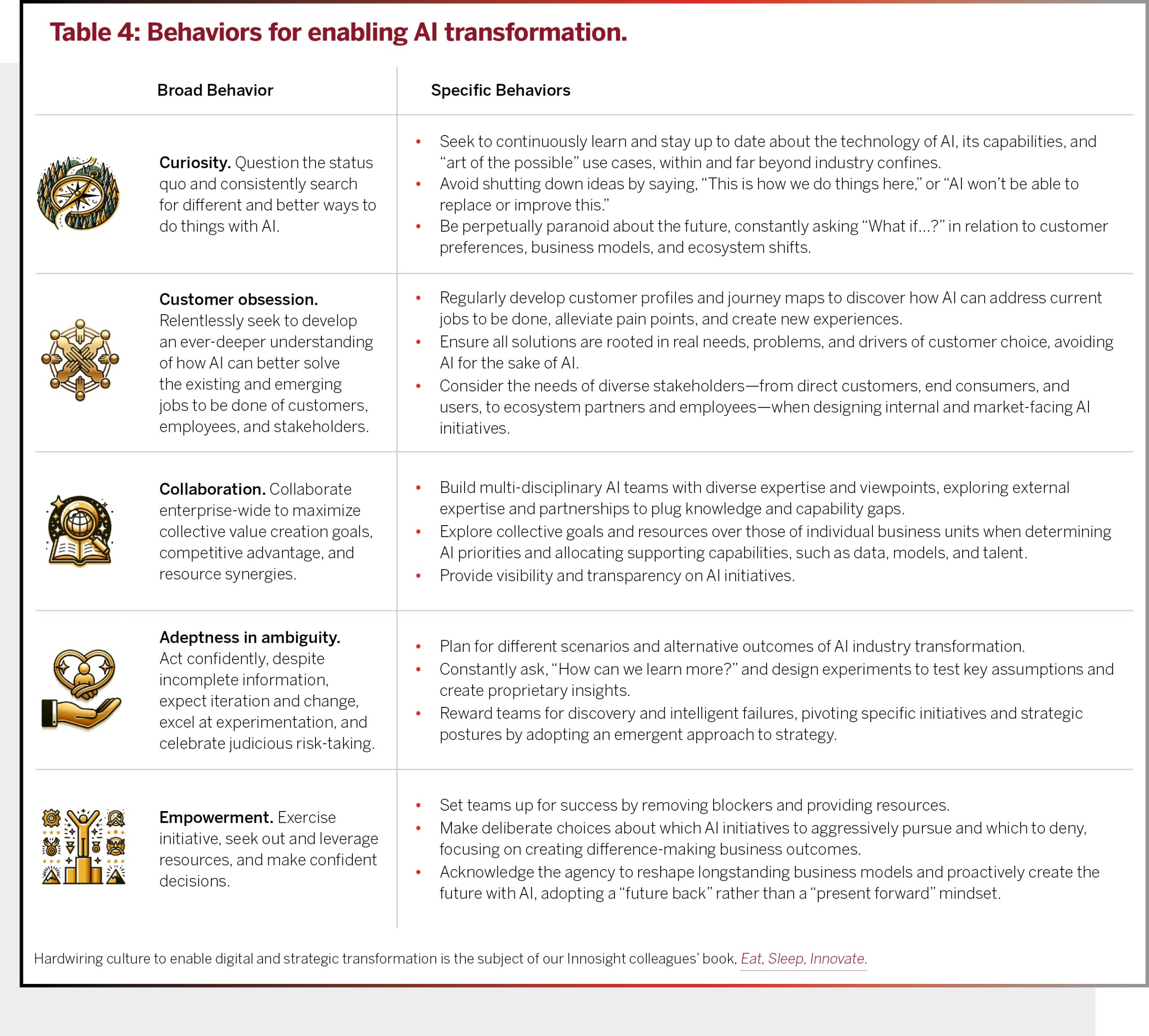

Hardwiring five behaviors can empower leaders to develop and pursue winning AI strategies: curiosity, customer obsession, collaboration, adeptness in ambiguity, and empowerment, as further detailed in Table 4.

From Amazon to JPMorgan and John Deere, companies leading with AI and capturing its upside potential across industries embody these behaviors. The journey to adopting them will differ among organizations, depending on their specific blockers. These blockers, which can be deeply rooted in the organization’s subconscious, must first be identified as part of a deliberate process of AI “culture by design.”

Crucially, the five broad behaviors should not only be embraced and role-modeled by leaders but should also cascade down and be hardwired through to AI strategy teams, and more broadly, talent throughout the organization that will be exposed to AI changes.

AI Talent and Talent Change Management

Companies must confront two major talent priorities in the AI era. First, they will need to arm themselves with AI-specific talent to deliver their strategies. Second, they will also need to systematically manage change throughout the workforce as AI gets woven into the organizational fabric.

Cultivating AI Talent

For most companies, a major hurdle is a lack of AI talent, which is a scarce resource. For instance, it is estimated that merely a few thousand individuals in the U.S. have the capabilities to develop a fully bespoke generative AI model. Demand for AI talent is, unsurprisingly, intensifying. A striking 2.1% of all current U.S. job postings are for roles requiring skills in at least one of “natural language processing,” “neural networks,” “machine learning,” or “robotics.”14 Companies as diverse as Walmart, Procter & Gamble, Goldman Sachs, Netflix, and commercial real estate titan JLL are offering mid-to-high six-figure compensation packages as they vie to fill roles like Machine Learning Platform Product Manager, Senior Manager of Generative AI, and Vice President of Artificial Intelligence.

A company’s talent strategy should align with and facilitate its AI transformation priorities and technological choices. The depth and diversity of skills needed will vary substantially, particularly when comparing the internal implementation of an off-the-shelf solution to developing a proprietary, customer-facing model for a unique use case that could yield a genuine competitive advantage.

AI teams will need to blend skills found in conventional innovation teams—like those of product managers, domain experts, business analysts, and user experience designers—with specialized roles. These include:

- AI engineers: Roles in this category include machine learning engineers, who formulate predictive models; robotics engineers, tasked with integrating AI algorithms into robotic systems; and conversational designers, who craft conversational flows to ensure smooth and effective interactions with chatbots.

- AI data scientists: These roles focus on managing, processing, and utilizing data for AI. This includes designing data requirements, securing the availability of data, curating and annotating data to enhance a model’s predictive accuracy and reliability, and using data to train AI models.

- AI ethics, risk, and compliance professionals: This category encompasses a range of roles dedicated to ensuring AI adheres to legal, ethical, and regulatory standards. Priorities include mitigating potential biases and ensuring fairness, transparency, and accountability in AI applications, navigating evolving policy and regulatory environments, safeguarding AI systems, and managing risks associated with their use.

Companies will need to cultivate talent through some combination of effectively competing on AI talent markets by offering attractive compensation packages and designing roles that afford autonomy, mastery, and purpose; internal training programs to upskill high-potential employees (for instance, Accenture is partly building its AI talent bench through internal training programs); and acquiring AI startups as a tactic to scoop up talent, a strategy being pursued by companies like ServiceNow.

Broader Talent Change Management

Every employee will encounter AI automation and augmentation sooner or later and to varying degrees, mirroring the ubiquitous impact of digital technologies since the advent of the PC and internet.

The potential is immense. Analysis by Morgan Stanley estimates that AI will affect 44% of the workforce and have a $4.1 trillion economic effect over the next three years alone through task automation and augmentation.15 A recent National Bureau of Economic Research working paper estimates that generative AI can automate 27% to 41% of labor time across industries,16 as depicted in Figure 3. Estimates incorporating all existing forms of AI and technology suggest that work activities that currently occupy 60% to 70% of employees’ time could be automated.5 This comes against a backdrop of soaring labor costs and demand that consistently exceeds supply, while American worker productivity experiences its steepest decline in 75 years.

But without adept change management as AI intertwines with the workforce, the potential benefits of AI to companies will, at best, be muted. At worst, organizations may expose themselves to a range of downsides, from technology misuse to the disenfranchisement of employees who feel perceived as interchangeable with algorithms.

To successfully navigate the talent implications of both operational and customer-facing AI initiatives, companies will need to address the following questions:

Which populations and roles are affected? Comprehensive assessment necessitates consideration of roles both directly and indirectly impacted by AI, accounting for business model interdependencies and spillover effects. For example, implementing AI-based demand forecasting directly influences supply chain analysts and inventory planners, whose work and decision-making will be automated and augmented through direct AI interaction. The effects also reverberate through adjacent roles: procurement officers may need to adjust their supplier relationship strategies, while operations staff navigate changes in the frequency, volume, and nature of shipments and handling requirements.

How will AI impact employees? The implications of AI on employees can be varied and profound. AI can automate or augment, at the level of individual tasks or entire roles. It can empower employees to immerse themselves in aspects of their work that offer autonomy, mastery, and purpose, or it can evoke feelings of disenfranchisement and fear. These effects can be complex and contradictory. For instance, a recent MIT research study found that the use of generative AI by professional writers enhanced both productivity and performance, while concurrently elevating both excitement about job enhancement and anxieties about job replacement.17 Even in these early stages of AI integration, forward-thinking leaders, while enthusiastic about AI’s potential to enhance workforce productivity and innovation, are becoming increasingly attuned to its potential negative impacts. Some 40% believe AI could diminish employees’ social interactions and connections, while a third anticipate a rise in mental health issues due to fears of job loss and uncertainty about the future.11 Relatedly, compared to company leaders, frontline employees are far less likely to be optimistic and far more likely to be concerned about AI, as Figure 4 shows.

Notably, the application of AI in HR and workforce management can not only mitigate potential drawbacks of AI, but meaningfully enhance employee value propositions and journeys. For instance, it can enhance well-being through workload management and personalized support, unlock new forms of collaboration through advanced tools and virtual team environments, and enable more personalized and continuous employee feedback that fosters development. But understanding both the positive and negative impacts of each AI implementation, using considerations like those in Table 5 as a guide, is crucial.

What specific interactions between employees and AI maximize benefits and minimize backlash? Each use case necessitates a granular view of how employees and AI interact and collaborate to produce the best outcomes. Without this, companies inadvertently expose themselves to nuanced and concealed risks, including improper use and overreliance. For instance, a Harvard Business School study of the use of AI in hiring found that recruiters using high-quality AI for candidate screening spent less time evaluating resumes and were more prone to defaulting to candidates recommended by the AI, compared to recruiters using low-quality AI. Consequently, they overlooked top candidates and made worse decisions compared with recruiters using low-quality or no AI. When AI enables good outcomes, employees can be less incentivized to exert effort and stay attentive, deferring to it instead of leveraging it as a performance-enhancing tool. Such “falling asleep at the wheel” has been observed repeatedly across settings and can lead not only to bad outcomes in the immediate term but also the atrophying of skills, knowledge, and judgement that are being exercised less but are still vital to the organization.

What change management is required? Training is a crucial element of this. For instance, employees utilizing generative AI will need guidance on how to integrate it into their workflows, and to learn specific skills like prompt engineering. Most employees, though—86% according to one recent survey18—report a lack of training on AI changes. IT giant Wipro has bucked this trend through workshops on AI fundamentals for its entire global staff of 250,000 while providing more specialized training for certain roles. However, training is just one of several change management necessities, especially considering the broad and deep implications of AI across all workforce issues, from the designs of roles and teams to metrics, incentives, and culture. Change must be orchestrated through an iterative “test & learn” approach, which, alongside training, should encompass communications, stakeholder engagement, risk management, and monitoring and evaluation.

Dall-E 3: A tree of knowledge adorned with tools representing various professions interspersed with circuit patterns, representing the impact of AI on jobs.

Beyond immediate, individual implementations, AI compels companies to reimagine the role of human capital as a strategic asset and enabler of competitive advantage. Existing blue-collar and white-collar roles alike are already being supplanted by AI. For example, Amazon has deployed robots that navigate warehouses alongside employees and have the dexterity to pick individual products. The U.K.-based telecoms giant BT plans to replace 10,000 jobs with AI through 2030, including a substantial number of customer service roles. One global consumer goods giant we have advised has reduced its insights team headcount by 40% after introducing AI, which delivers consumer insights that are not only lower-cost but fundamentally better. As intelligent agents and robots integrate with the workforce, enterprise talent strategies will require a reset.

5: Systematically Manage AI-Related Uncertainty

Leaders rightly focus considerable attention on AI risks. Addressing anticipated challenges like inaccuracy, cybersecurity, and data privacy, which are top of mind and unresolved among a majority of CEOs and companies, is critical. But it is also only table stakes. What will set companies apart in creating value in the era of AI is their adeptness in managing bigger-picture AI-related uncertainty and ambiguity. AI presents a rare example of what our colleague Patrick Viguerie and his co-authors termed a “Level 4” uncertainty in their iconic 1997 Harvard Business Review article, “Strategy Under Uncertainty.” The highest level of strategic uncertainty, Level 4, is where “multiple dimensions of uncertainty interact to create an environment that is virtually impossible to predict. The range of scenarios cannot be identified, let alone scenarios within that range. It might not even be possible to identify, much less predict, all the relevant variables that will define the future.”

Sizing the AI Uncertainty

The outcomes of all general-purpose technologies are unpredictable. When the internal combustion engine was invented, few could have predicted its impact on urban design, global trade and travel, geopolitics and conflicts over oil, global warming and respiratory health, and the birth and boom of industries from rubber to drive-thru restaurants. Even the most forward-thinking observers in the mid-1990s could not have foreseen how the PC and the internet would give rise to social media and influencer culture, the gig economy, sweeping data privacy concerns, streaming and the decline of traditional media, remote work, fake news, online dating, and youth mental health challenges. Humans entrenched in current paradigms struggle to imagine alternative futures shaped by disruptive technologies, let alone predict them accurately. In attempting to do so, companies have repeatedly either missed the boat (like Western Union with the telephone, AT&T with cellular, and Nokia with the smartphone) or leapt off the dock onto one that barely set sail, like Iridium did when it bet big on satellite phones replacing cellular in the 1990s.

Even compared to past general-purpose technologies, AI’s implications for industries and society are uniquely uncertain. Stephen Hawking framed this in 2017, when he said, “AI could be the biggest event in the history of our civilization. Or the worst. We just don’t know. So we cannot know if we will be infinitely helped by AI, or ignored by it and side-lined, or conceivably destroyed by it.” Since then, those closest to AI have with increasing frequency and seriousness touted the potential for outcomes as extreme and antithetical as utopia and dystopia—whereby AI could replace human toil and scarcity with untold material abundance, profound scientific discovery, ecological splendor, and far longer and healthier lifespans—or induce an Orwellian world of mass unemployment, never-before-seen levels of inequality and discrimination, the dissolution of truth and democracy, undermining of the nation state, terrifying new weapons, human enfeeblement (think Wall-E), and even extinction.

The acute uncertainty AI poses arises from three interrelated and intrinsic characteristics:

- Modern AI is not just another tool, but the emergence of a potent non-human intelligence with truly boundless possibilities. It is in various stages of solving several of humanity’s grand challenges, from protein folding to nuclear fusion and climate change. It has already been applied to unscramble human brainwaves to do everything from reconstructing images, thoughts, and music, to restoring walking and speech in paralyzed individuals, with a researcher behind one of those efforts remarking that this could eventually end the use of cellphones to communicate, and that instead, “We can just think.” Even among general-purpose technologies, it is uniquely omni-use and far-reaching.

- AI is at least partially auto-enabling and self-fulfilling. It is helping advance its own development, which is happening at increasingly breakneck speed, by generating datasets, designing enhanced AI processors, and training new AI models. The limits of this upward spiral are unknown.

- AI has a tendency to acquire capabilities and exhibit behaviors and decisions that are not always expected or explainable. Emergent capabilities like logical reasoning, for example, have arrived far ahead of expectations, and to the surprise and even bewilderment of some of the field’s most important pioneers, like Geoffrey Hinton.

AI has repeatedly surprised its pioneers in the pace and direction of its development. Mustafa Suleyman, who co-founded both DeepMind and Inflection AI, states in his book, The Coming Wave, “The speed and power of this new revolution have been surprising even to those of us closest to its cutting edge.” Sam Altman, CEO of OpenAI, has noted that, contrary to his and many others’ predictions that AI would first impact blue-collar jobs, then white-collar, and lastly creative jobs, it appears the reverse is playing out, with creative jobs like those in the gaming industry being among the most affected so far.

Consider that, only five years before the 2022 launch of ChatGPT, Google researchers published the first paper on transformers, the ‘T’ in GPT, Generative Pre-trained Transformer. Also in 2017, MIT physicist and AI researcher Max Tegmark published his book, Life 3.0, which stated, “Deep-learning systems are thus taking baby steps toward passing the famous Turing test, where a machine has to converse well enough in writing to trick a person into thinking that it too is human. Language-processing AI still has a long way to go, though.” Predictions of the AI future aggregated by the online forecasting platform Metaculus, from whether there will be human-machine intelligence parity before 2040 to the timing of a potential AI catastrophe and even when most Americans will personally know someone who has dated an AI, continue to fluctuate significantly, though they are generally trending toward sooner rather than later.

AI uncertainty is already causing twists and turns in the expectations and fortunes of industries and the companies within them. Not long ago, analysts were prophesying the death of Adobe’s image products following the emergence of tools like DALL-E 2 and Midjourney. But Adobe’s hundreds of millions of stock photos let it train and release its own generative AI for images in March 2023. Six months after this release, the company’s share price was up by 50%.

Beyond the direct implications of AI for specific industries, the unfolding AI era will also require companies to become adept in managing more systemic uncertainties. These range from the potential for deepfakes to undermine elections and cause political instability, to financial crises induced by the use of AI in trading. SEC Chair Gary Gensler has warned about such dangers, suggesting that the increasing adoption of deep learning in finance could escalate systemic risks. The trillion-dollar “Flash Crash” of May 6, 2010, serves as a stark illustration of such risks. That brief yet chaotic event saw shares of major companies like Procter & Gamble swing in price between $0.01 and $100,000, due to unanticipated flaws in automated trading programs.

Tactics for Managing AI Uncertainty

Faced with uncertainty of great magnitude, leaders and organizations can understandably become paralyzed, not knowing what success looks like, let alone what actions to take to realize it. But the winners are rarely those who wait and watch events unfold around them. More often, they are those who proactively manage uncertainty, create proprietary insights, and make bold moves in the absence of publicly available data about the future, which is only available once it has been created by faster-moving competitors, whose success constrains the freedom to act. We call this phenomenon the information-action paradox, which is depicted in Figure 5.

Companies should aspire to navigate and capture the upsides of AI uncertainty by employing the principles we outline below, embodying the art and science of managing uncertainty.

1. Frame key uncertainty drivers and maintain a fact base. Many complex and intertwined variables will shape the AI future. Across industries, these range from the speed and direction of AI technology and regulatory developments to the impact of AI on everything from employment and consumer trust to global power dynamics. Companies should identify both the broad and industry-specific variables that ought to influence their AI strategies, determine for each of them what is currently known, what is discoverable, and what is for now unknowable, and continuously update their understanding of these factors to inform decision-making.

2. Develop a handful of competing scenarios based on the most critical uncertainties. The fact that even many of the individual variables that will define the AI future are as yet unknown or poorly defined makes it practically impossible to model a set of scenarios that are collectively and individually complete. But maintaining a handful of plausible competing scenarios that are only as complete as they can be in the current state, and simulating war games across them, can help companies identify actions to maximize opportunities and minimize risks.

3. Apply an emergent approach to strategy. Companies should craft an AI transformation roadmap and make informed strategic choices about AI models and data. Absent this, companies risk inertia or a scattershot approach to AI. But it is vital for strategic choices to be dynamically reviewed and pivoted in response to internally generated learnings, like those about customer engagement with AI products, and external developments, like technological and regulatory shifts, which should be tracked through a “watchtower” approach. Adaptive capacity in strategic planning more broadly will also be vital, given the potential of AI to cause disruptive systemic shocks, as outlined earlier.

Crucially, companies should carefully balance the urgency to act boldly with the risk of prematurely making path-limiting or hard-to-reverse strategic moves, in particular based on prophecies and speculations about the AI future that may well be plausible but are entirely unproven and unreliable.

Predictions about AI’s implications for jobs, for example, vary from doom to boom, with subscribers to these diametrically opposed outcomes each marshaling logical arguments, historical analogies, and emerging data points that appear equally valid. Jobs boomers, for example, argue that AI will create jobs that haven’t even been imagined yet and point to research like that from MIT economist David Autor, which shows that 60% of current U.S. jobs had not yet been “invented” in 1940 and more than 85% of employment growth over the last 80 years is explained by technology-driven creation of previously unimagined new occupations, from e-commerce order-fulfillment to software development.19 Jobs doomers meanwhile argue that human intelligence has been central to employment, and that mechanical minds can make humans redundant just as mechanical muscles did to horses by gradually replacing them in tasks like plowing soil, turning mine-shaft pumps, moving goods, and transporting passengers, such that the U.S. population of horses fell from 26 million in 1915 to three million in 1960. Both outcomes are extreme simplifications of the real range of possibilities for AI’s effect on jobs, in which any specific scenario, though plausible, is individually unlikely.

4. Make innovation and learning a discipline. The best way for organizations to understand the capabilities, behaviors, and implications of AI is to innovate and experiment with it in hands-on ways. LinkedIn, for example, is experimenting by embedding AI features across its portfolio, encompassing professional networking, job search and recruiting, marketing and sales, and educational offerings. Similarly, JPMorgan, which operates in an industry that ranks among the earliest adopters of AI and is making several substantial bets on the technology, currently has more than 300 AI use cases in production for risk, prospecting, marketing, customer experience, and fraud prevention.

Studying the patterns of past disruptive technologies, and staying abreast of AI developments within and far beyond the organization’s immediate domains, can also help enable a rigorous learning culture, as can making sure leaders share a basic technical understanding and common language of AI.

While the magnitude of uncertainty posed by AI can challenge leadership teams, it also creates opportunities with disproportionate upsides for those able to navigate it effectively.
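As one illustration of the second principle above, comparing candidate actions across a handful of competing scenarios, the short Python sketch below ranks actions by their worst-case regret. It is a minimal sketch rather than a prescribed method: the scenario names, action names, and payoff figures are all hypothetical placeholders, and minimax regret is only one of several decision rules a leadership team might choose to apply.

```python
# Hypothetical payoffs (arbitrary units) for each action under each scenario.
# All names and numbers are illustrative assumptions, not data from this article.
PAYOFFS = {
    "wait_and_watch": {"slow_adoption": 2, "rapid_adoption": -4, "regulatory_clampdown": 1},
    "pilot_and_learn": {"slow_adoption": 1, "rapid_adoption": 3, "regulatory_clampdown": 0},
    "bet_big": {"slow_adoption": -3, "rapid_adoption": 6, "regulatory_clampdown": -5},
}


def regret_table(payoffs):
    """Regret = best achievable payoff in a scenario minus the action's payoff."""
    scenarios = list(next(iter(payoffs.values())).keys())
    best = {s: max(p[s] for p in payoffs.values()) for s in scenarios}
    return {
        action: {s: best[s] - outcome[s] for s in scenarios}
        for action, outcome in payoffs.items()
    }


def minimax_regret_action(payoffs):
    """Return the action whose worst-case regret is smallest, plus all worst cases."""
    regrets = regret_table(payoffs)
    worst = {action: max(r.values()) for action, r in regrets.items()}
    return min(worst, key=worst.get), worst


if __name__ == "__main__":
    choice, worst_case = minimax_regret_action(PAYOFFS)
    print("Worst-case regret by action:", worst_case)
    print("Minimax-regret choice:", choice)
```

With these placeholder numbers, the rule favors the middle path of piloting and learning, because waiting risks the largest missed upside and betting big risks the largest loss. The value of such an exercise lies less in the answer than in forcing explicit assumptions that can be revisited as the fact base changes.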

Dall-E 3: A Rubik’s cube where each color represents a different AI scenario and various AI and technology symbols on the cube’s faces.

Conclusion

Leaders should not see AI merely as another tool, but rather embrace it as a revolution poised to reshape every industry and aspect of how we live and work more profoundly than anything witnessed in our lifetimes. AI technologies, already immensely capable with an endless number of powerful use cases, are advancing at a rapid pace and will continue to do so in unforeseeable ways. Our five recommendations will only become more important in the foreseeable future. Together, they provide a blueprint for empowering leaders to navigate disruptive change and lead into the age of AI.

About the Authors

Glossary of Common AI Terms

Levels of AI

- Artificial intelligence (AI): A field of computer science dedicated to creating systems capable of performing tasks that usually require human intelligence, such as visual perception and decision-making.